Friendly by nature.

Powerful by design.

Your personal fleet of AI agents - each with its own personality, memory, and skills. They live in your messaging apps, orchestrate real workflows, and run on infrastructure you can actually trust.

Quick Start

# Works everywhere. Installs everything. Friendly about it.

$ curl -fsSL https://comis.ai/install.sh | bash

Works on macOS & Linux. The one-liner installs Node.js and everything else for you.

Your AI assistant hits a ceiling

Your AI is impressive.

Until it isn't.

You've pushed your AI assistant as far as it goes. Here's where it breaks down.

One agent doing everything.

No specialization, no debate, one perspective on every question. Your AI gives the same kind of answer whether you ask about code, strategy, or creative writing. No second opinions. No pushback. No growth.

Context that disappears.

Long conversations degrade. Context windows fill up and your agent silently drops what mattered most. No compression strategy, no memory retrieval, no recovery after overflow. Every deep conversation eventually resets to zero.

Prompt chains, not workflows.

No orchestration. No parallel execution. No DAG pipelines. No scheduling. You are the workflow engine - copy-pasting outputs between prompts, manually sequencing steps, babysitting every run.

What you graduate to

A team with soul. Orchestration in plain language. Built right.

A team with soul, not a single bot

Every agent has its own SOUL.md and IDENTITY.md - distinct personalities, conviction, ways of thinking. Not just different system prompts. Each runs its own model, memory, skills, and budget. Your research agent thinks differently than your coding agent. They challenge each other. They evolve.

Orchestrate anything in plain language

Create agents, build DAG pipelines, set schedules - all by describing what you want. No config files. No YAML. Your agents hand off work to each other, run tasks in parallel, and report back. You just say what you need.

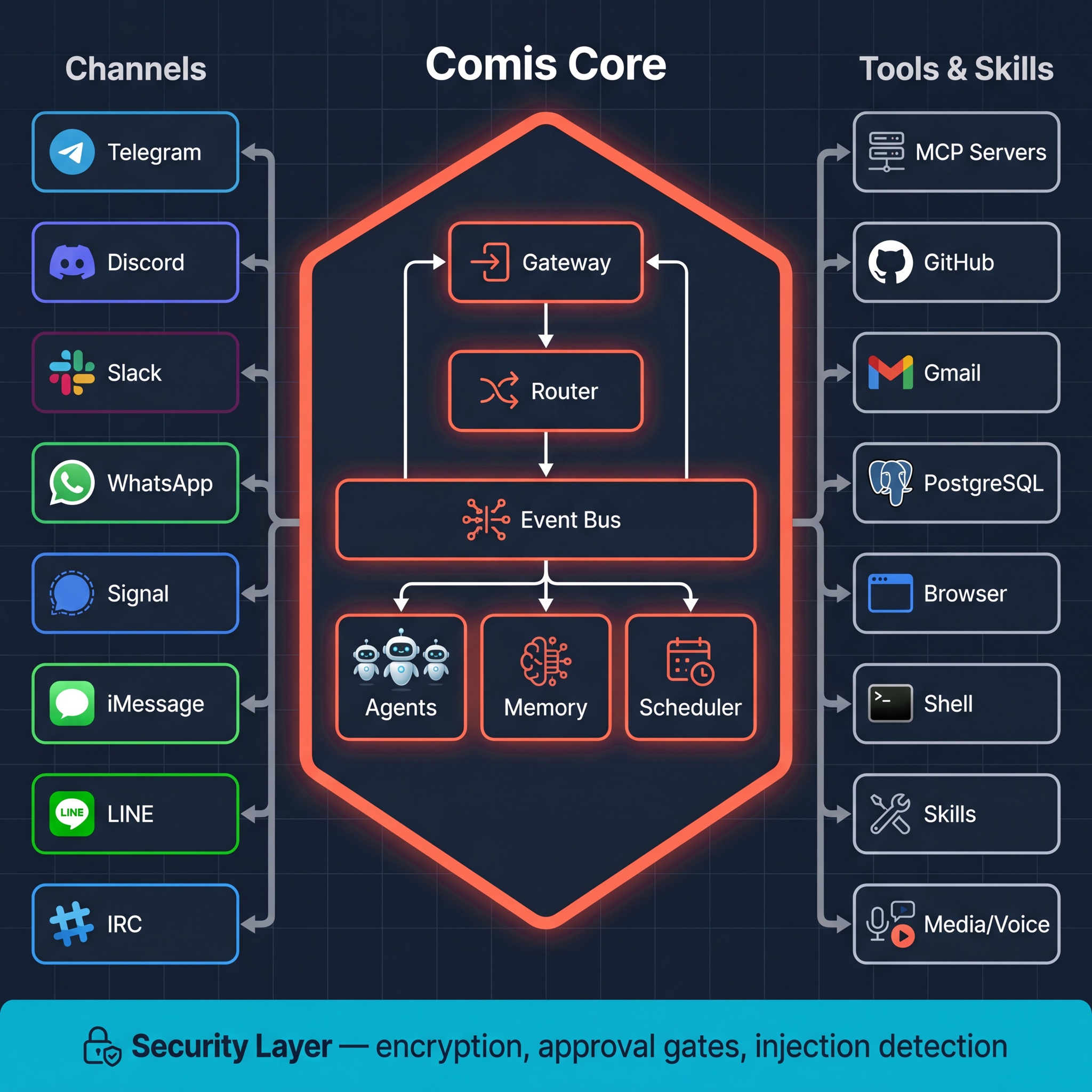

Built right from the ground up

When your agents have real power, infrastructure matters. Auth by default. Injection detection. Trust-partitioned memory. Encrypted secrets. Approval gates. Kernel-enforced exec sandbox. Full audit trail. Plus they meet you where you are - Discord, Telegram, Slack, WhatsApp, Signal, iMessage, IRC, LINE, Email.

Zero learning curve

No config files. No learning curve. Just say it.

Building a fleet of agents or a complex workflow shouldn't require reading docs for an hour. Just describe what you want - Comis creates it autonomously.

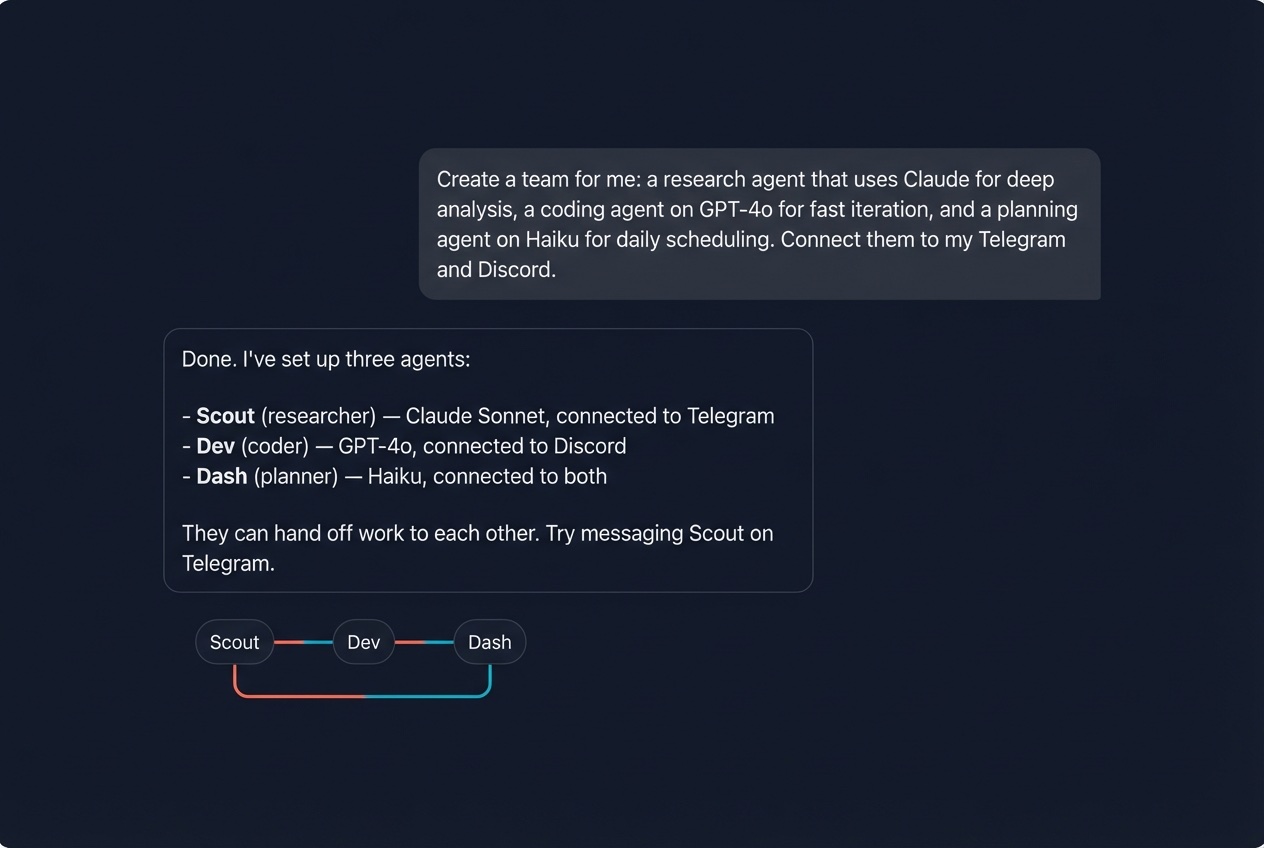

Create a team in one message

Three agents. Two channels. Different models. One sentence from you.

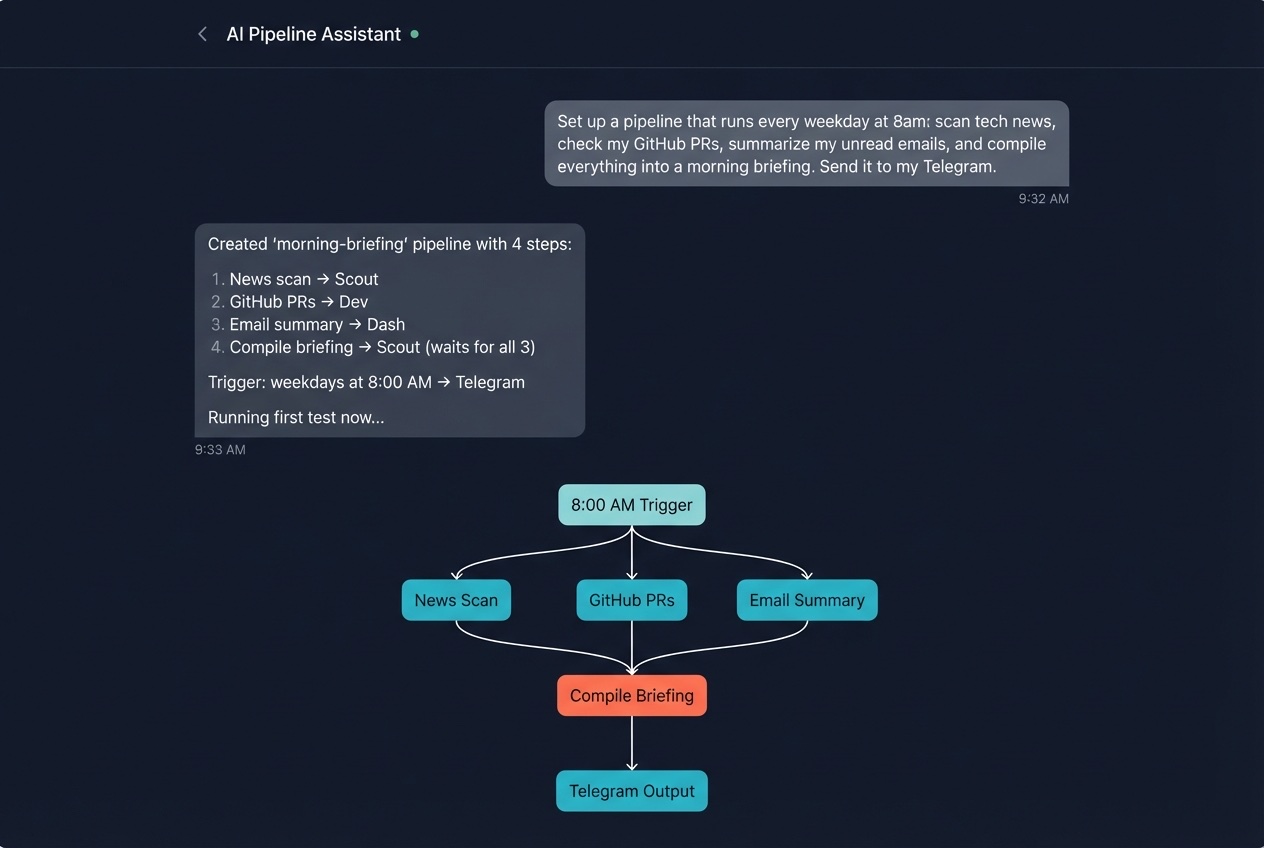

Build a pipeline by describing it

A full DAG pipeline - parallel fan-out, barrier sync, cron trigger - from a single sentence.

Evolve it conversationally:

"Add a step that checks my portfolio positions before the market opens. Have the analyst agent handle it."

"Added 'portfolio-check' step to morning-briefing. Scout will include it in the compiled briefing. Pipeline now has 5 steps."

No YAML. No restart. Just tell it what you want next.

Other tools make you write config. Comis lets you have a conversation.

Security deep dive

Security that's engineered in, not bolted on.

Other AI agents ship fast and patch later. Comis was designed from day one around the question: what happens when an AI agent has real power and someone tries to abuse it?

Remote code execution: Attackers hijack agents through exposed endpoints and WebSocket connections.

→ Authenticated by default.

mTLS gateway, bearer token auth, no open ports without explicit configuration.

Prompt injection: Malicious instructions hidden in web pages or fetched content trick agents into executing attacker commands.

→ Injection detection.

48 attack patterns across 13 weighted categories detected and blocked. External content is marked and isolated.

Memory poisoning: Attackers modify an agent's persistent memory to change its behavior over time.

→ Trust-partitioned memory.

Three trust levels - system, learned, external. Low-trust sources can't overwrite high-trust memories.
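To make the overwrite rule concrete, here is a minimal sketch of a trust-ranked write check. The class, field names, and ranking order (system above learned above external) are assumptions made for illustration, not Comis's actual API.

```typescript
// Hypothetical sketch: names and ranking are assumptions, not Comis's API.
type TrustLevel = "system" | "learned" | "external";

// Higher number = more trusted.
const TRUST_RANK: Record<TrustLevel, number> = {
  external: 0,
  learned: 1,
  system: 2,
};

interface MemoryEntry {
  key: string;
  value: string;
  trust: TrustLevel;
}

class TrustPartitionedMemory {
  private entries = new Map<string, MemoryEntry>();

  // Returns true if the write was accepted, false if a more trusted
  // entry already holds this key.
  write(entry: MemoryEntry): boolean {
    const existing = this.entries.get(entry.key);
    if (existing && TRUST_RANK[entry.trust] < TRUST_RANK[existing.trust]) {
      return false;
    }
    this.entries.set(entry.key, entry);
    return true;
  }

  read(key: string): MemoryEntry | undefined {
    return this.entries.get(key);
  }
}

const memory = new TrustPartitionedMemory();
memory.write({ key: "user.timezone", value: "Europe/Berlin", trust: "learned" });

// A fetched web page (external trust) tries to change what the agent learned.
const accepted = memory.write({ key: "user.timezone", value: "UTC", trust: "external" });
```

In this sketch the external write is rejected and the learned value stays in place, while a later write at equal or higher trust would still go through.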

Credential exposure: API keys and secrets leaked through logs, tool outputs, or unencrypted storage.

→ Encrypted secrets.

AES-256 at rest. 18 log redaction rules. Never in tool output, never in chat responses.

Unchecked tool access: Agents with shell and file access operating with no oversight.

→ Approval gates + kernel sandbox.

Destructive actions require your sign-off. Shell commands run inside an OS-level filesystem sandbox - agents can only see their own workspace, not your host system.

Malicious skills: Community skills that exfiltrate data or override safety guidelines.

→ Skill policies + tool whitelisting.

Each agent has an explicit allowlist. Skills can't escalate privileges or access tools outside their scope.

Data exfiltration: Agents tricked into sending private data to external servers.

→ SSRF protection.

Outbound requests to private networks, localhost, and cloud metadata endpoints are blocked.
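As a rough illustration of that kind of outbound filter, the sketch below rejects URLs whose host is localhost or a literal IPv4 address in a loopback, private, or link-local range (which includes the common 169.254.169.254 metadata address). The function names are hypothetical, and a production guard would also need to resolve DNS names and cover IPv6 ranges, which this sketch does not.

```typescript
// Hypothetical sketch: literal hosts only. A real guard must also check
// the addresses a hostname resolves to, and the full IPv6 space.
type IPv4 = [number, number, number, number];

function parseIPv4(host: string): IPv4 | null {
  const m = /^(\d{1,3})\.(\d{1,3})\.(\d{1,3})\.(\d{1,3})$/.exec(host);
  if (!m) return null;
  const octets: IPv4 = [Number(m[1]), Number(m[2]), Number(m[3]), Number(m[4])];
  return octets.every((o) => o <= 255) ? octets : null;
}

function isBlockedHost(host: string): boolean {
  const h = host.toLowerCase();
  if (h === "localhost" || h.endsWith(".localhost") || h === "[::1]") return true;
  const ip = parseIPv4(h);
  if (!ip) return false; // a DNS name: would need resolution before deciding
  const [a, b] = ip;
  return (
    a === 0 ||                           // "this network"
    a === 127 ||                         // loopback
    a === 10 ||                          // private (RFC 1918)
    (a === 172 && b >= 16 && b <= 31) || // private (RFC 1918)
    (a === 192 && b === 168) ||          // private (RFC 1918)
    (a === 169 && b === 254)             // link-local, incl. cloud metadata
  );
}

function isBlockedUrl(rawUrl: string): boolean {
  return isBlockedHost(new URL(rawUrl).hostname);
}

const metadataBlocked = isBlockedUrl("http://169.254.169.254/latest/meta-data/");
const publicBlocked = isBlockedUrl("https://example.com/");
```

Here `metadataBlocked` is true and `publicBlocked` is false. Checking only the literal host is the weakest form of this defense, since a public DNS name can still resolve to a private address.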

No audit trail: When something goes wrong, there's no record of what happened.

→ Full audit system.

Every security-relevant action is logged, classified, and traceable with trace IDs.

Other agents ask you to trust them. Comis gives you reasons to.

Context intelligence

Context that never dies.

Most AI agents degrade as conversations grow. Context windows fill up, earlier context gets dropped, and the agent forgets what mattered. Comis runs a multi-layer context engine before every LLM call - compressing intelligently, evicting dead content, and rehydrating critical context after compression.

The result: your agents maintain coherence across sessions that would break any other system.

Context Pipeline

Runs before every LLM call. Each layer is fault-isolated.

Thinking Block Cleaner

Strips extended thinking traces from older turns (default: 10 turns), keeping recent reasoning while reclaiming tokens from stale deliberation. Zero overhead for non-reasoning models.

Reasoning Tag Stripper

Strips inline reasoning tags from non-Anthropic providers persisted in session history. Always active regardless of the current model - sessions may contain mixed-provider responses.

History Window

Keeps the last N user turns per channel type. Group chats get tighter windows than DMs. Pair-safe: never splits tool-call/tool-result pairs. Compaction summaries always preserved.
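One simple way to get the pair-safe property is to cut history only at user-turn boundaries, so a tool call and its result always fall on the same side of the cut. The sketch below assumes a flat message list with hypothetical role names and treats compaction summaries as always kept. It illustrates the idea and is not Comis's implementation.

```typescript
// Hypothetical sketch: message shape and role names are assumptions.
type Role = "user" | "assistant" | "tool_call" | "tool_result" | "summary";

interface Message {
  role: Role;
  text: string;
}

function applyHistoryWindow(history: Message[], maxUserTurns: number): Message[] {
  // Indices where each user turn begins.
  const userIdx: number[] = [];
  history.forEach((m, i) => {
    if (m.role === "user") userIdx.push(i);
  });

  // Cut at the start of the Nth most recent user turn (N is at least 1).
  const turns = Math.max(1, maxUserTurns);
  const cut = userIdx.length > turns ? (userIdx[userIdx.length - turns] ?? 0) : 0;

  // Keep everything from the cut onward, plus any earlier summaries.
  return history.filter((m, i) => i >= cut || m.role === "summary");
}

const history: Message[] = [
  { role: "summary", text: "Earlier: user set up the repo." },
  { role: "user", text: "a" },
  { role: "tool_call", text: "read_file(README.md)" },
  { role: "tool_result", text: "# Project" },
  { role: "assistant", text: "Here is the README." },
  { role: "user", text: "b" },
  { role: "assistant", text: "Sure." },
  { role: "user", text: "c" },
  { role: "tool_call", text: "run(tests)" },
  { role: "tool_result", text: "ok" },
  { role: "assistant", text: "Tests pass." },
];

const kept = applyHistoryWindow(history, 2);
```

With a window of two user turns, `kept` holds the summary plus everything from turn "b" onward. The older tool call and its result are dropped together.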

Dead Content Evictor

Detects superseded file reads, re-run commands, stale errors, and old images. Replaces them with lightweight placeholders. O(n) forward-index scanning.

Observation Masker

Three-tier masking: protected tools (memory, file reads) are exempt, standard tools use a normal keep window, ephemeral tools (web searches) get a shorter window. Changes persist to disk for stable cache prefixes.

LLM Compaction

When context exceeds 85% of the model window, an LLM summarizes older messages into structured sections. Three-level fallback guarantees success. Cooldown prevents re-triggering.

Rehydration

After compaction, critical instructions, recently-accessed files, and active state are re-injected. Split injection keeps stable content in cache-friendly positions.

Objective Reinforcement

Subagent objectives are re-injected after compaction so delegated tasks stay on track even through context compression.

H = W - S - O - M - R

Token budget algebra

Every LLM call gets a precise budget H: the model window (W) minus the system prompt (S), output reserve (O), safety margin (M), and a 25% context rot buffer (R).
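The algebra H = W - S - O - M - R can be sketched as a small function. The page does not say what the 25% is measured against, so this sketch assumes the rot buffer is a fraction of the full window. The field names and the example numbers are illustrative only.

```typescript
// Hypothetical sketch: field names and the "25% of the window" reading
// of the rot buffer are assumptions.
interface BudgetInputs {
  windowTokens: number;        // W: model context window
  systemPromptTokens: number;  // S
  outputReserveTokens: number; // O
  safetyMarginTokens: number;  // M
  rotBufferRatio: number;      // R is computed as ratio * W
}

function historyBudget(b: BudgetInputs): number {
  const rotBuffer = Math.floor(b.windowTokens * b.rotBufferRatio);
  const h =
    b.windowTokens -
    b.systemPromptTokens -
    b.outputReserveTokens -
    b.safetyMarginTokens -
    rotBuffer;
  // Never report a negative budget.
  return Math.max(0, h);
}

const budget = historyBudget({
  windowTokens: 200_000,
  systemPromptTokens: 6_000,
  outputReserveTokens: 8_000,
  safetyMarginTokens: 2_000,
  rotBufferRatio: 0.25,
});
// 200000 - 6000 - 8000 - 2000 - 50000 = 134000
```

Under these example numbers, roughly two thirds of the window remains for conversation history.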

3-tier disclosure

Progressive tool loading

Lean definitions always present, detailed guides on first use, irrelevant tools deferred. 50+ tools cost the context of 15.

3-level fallback

Compaction resilience

Full structured summary with quality validation, filtered summarization, or guaranteed count-only note. Never fails.

Depth-aware DAG

Next-gen compression

A directed acyclic graph with hierarchical summarization: operational detail, session summaries, phase summaries, and durable project memory.

Circuit breakers

Fault isolation

Each pipeline layer has its own circuit breaker. Three consecutive failures disable a layer for the session. Other layers keep running.
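A per-layer breaker along these lines might look like the sketch below. The types and function names are hypothetical, and the pass-through on failure is an assumption about what fault isolation means here. The only behavior taken from the description is that three consecutive failures disable a layer while the others keep running.

```typescript
// Hypothetical sketch: names are assumptions, not Comis's API.
type Layer<T> = { name: string; run: (input: T) => T };

class LayerBreaker {
  private consecutiveFailures = 0;
  private readonly threshold: number;

  constructor(threshold = 3) {
    this.threshold = threshold;
  }

  get open(): boolean {
    return this.consecutiveFailures >= this.threshold;
  }

  recordSuccess(): void {
    this.consecutiveFailures = 0;
  }

  recordFailure(): void {
    this.consecutiveFailures++;
  }
}

// `breakers` lives for the whole session, so state carries across calls.
function runPipeline<T>(
  layers: Layer<T>[],
  breakers: Map<string, LayerBreaker>,
  input: T,
): T {
  let current = input;
  for (const layer of layers) {
    let breaker = breakers.get(layer.name);
    if (!breaker) {
      breaker = new LayerBreaker();
      breakers.set(layer.name, breaker);
    }
    if (breaker.open) continue; // layer disabled for the rest of the session
    try {
      current = layer.run(current);
      breaker.recordSuccess();
    } catch {
      breaker.recordFailure(); // isolate the fault: pass input through unchanged
    }
  }
  return current;
}

let flakyCalls = 0;
const layers: Layer<string>[] = [
  { name: "flaky", run: () => { flakyCalls++; throw new Error("boom"); } },
  { name: "append", run: (s) => s + "!" },
];
const breakers = new Map<string, LayerBreaker>();
const outputs = [1, 2, 3, 4].map(() => runPipeline(layers, breakers, "ctx"));
```

Across four runs the failing layer is attempted three times and then skipped, while the healthy layer still produces its output on every run.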

Persistent memory with semantic search

SQLite + FTS5 full-text search with vector embeddings for semantic recall. Trust-partitioned memory ensures external content can never overwrite your agent's learned knowledge. RAG retrieval pulls relevant memories into every conversation with provenance annotations and trust-level filtering.

Session continuity across restarts

JSONL session persistence with crash recovery. The DAG context engine reconciles session state after restarts - no lost context, no repeated work. Microcompaction offloads large tool results to disk while keeping lightweight references inline.

Other agents forget what matters. Comis compresses, rehydrates, and remembers.

What you can do

Real tools. Real workflows. Real results.

Context that gets smarter

An 8-layer pipeline manages context before every LLM call. Dead content eviction, observation masking, LLM compaction with 3-level fallback, and progressive tool disclosure that loads detailed guidance only when needed. Your agents never forget what matters.

Automate your mornings

Graph pipelines run multi-step workflows on a schedule. Scan the news, summarize your email, check your calendar, compile a briefing, deliver it to Telegram at 8am.

50+ tools, one config line

GitHub, Gmail, Notion, PostgreSQL, Brave Search, browser automation, sandboxed shell - connect anything via MCP. Shell commands run inside an OS-level filesystem sandbox so agents can only access their own workspace.

Voice and media, naturally

Send a voice note, get a text reply. Analyze images with vision models. Transcribe audio with Whisper, Groq, or Deepgram. Generate speech with OpenAI, ElevenLabs, or Edge TTS.

Any model, your choice

Claude, GPT, Gemini, Groq, local models via Ollama, or anything on OpenRouter. Different agents can use different models. Tool presentation adapts automatically to each model's context window - small models get focused tool sets, large models get the full picture. No vendor lock-in.

Memory that persists

SQLite with FTS5 full-text search and vector embeddings. Trust-partitioned storage prevents memory poisoning. RAG retrieval injects relevant history with provenance annotations.

Lives where you already are.

Text, voice, images, files, reactions, threads - full experience on every platform.

How it compares

Not another chatbot.

| | Typical AI agents | Comis |
|---|---|---|
| Authentication | Often missing or optional | Required by default, mTLS support |
| Prompt injection | No detection | 48 patterns across 13 weighted categories |
| Memory safety | Single trust level, poisonable | Trust-partitioned - your words vs. the internet's |
| Secrets | Plaintext in config, leaked in logs | AES-256 encrypted, 18 redaction rules |
| Tool access | Unchecked - full shell by default | Approval gates, per-agent tool policies, OS-level exec sandbox |
| Skill safety | Install and hope | Allowlists, scope isolation |
| Network safety | No SSRF protection | Private network + metadata endpoints blocked |
| Audit trail | None | Every action logged and classified |
| Context management | Truncate and hope | 8-layer pipeline: eviction, masking, compaction, rehydration |
| Tool overhead | All tool definitions on every turn | Progressive disclosure: lean definitions, just-in-time guides, context-aware deferral |
| Small model support | Same bloated context for all models | Model-aware tool presentation: pruned schemas, focused tool sets |
| Memory | Session-only, no recall | Persistent semantic search with trust-partitioned RAG |
| Budget | Unlimited spend | Three-tier budget guard: per-execution, per-hour, per-day |
| Agents | One does everything | A team of specialists |
| Channels | Varies | 9 platforms, full experience |
| Models | Often locked to one provider | Any model, any provider |
| Source | Closed or partially open | Fully open source, Apache-2.0 |

Under the hood

Hexagonal architecture. Plug anything in.

Core defines interfaces. Everything else - channels, tools, memory, LLM providers - plugs in from the outside. Adding a new channel or tool means implementing a port, not rewriting logic.

13 packages. TypeScript monorepo. Every function returns a typed Result - no thrown exceptions. Fully open source.
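For readers unfamiliar with the pattern, here is a minimal sketch of a port whose methods return a typed Result instead of throwing. The `Result` shape, the `ChannelPort` interface, and the in-memory adapter are all hypothetical examples written for this page, not the project's actual definitions.

```typescript
// Hypothetical sketch: these types are assumptions, not Comis's own.
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };

function ok<T>(value: T): Result<T, never> {
  return { ok: true, value };
}

function err<E>(error: E): Result<never, E> {
  return { ok: false, error };
}

// A port: the interface the core depends on.
interface ChannelPort {
  send(chatId: string, text: string): Result<{ messageId: number }, string>;
}

// An adapter plugged in from the outside. A real one would call a chat API.
class InMemoryChannel implements ChannelPort {
  readonly sent: string[] = [];

  send(chatId: string, text: string): Result<{ messageId: number }, string> {
    if (text.length === 0) return err("empty message");
    this.sent.push(`${chatId}: ${text}`);
    return ok({ messageId: this.sent.length });
  }
}

// Core logic sees only the port, and must handle both Result branches.
function notify(channel: ChannelPort, chatId: string, text: string): string {
  const result = channel.send(chatId, text);
  return result.ok
    ? `delivered #${result.value.messageId}`
    : `failed: ${result.error}`;
}

const channel = new InMemoryChannel();
const first = notify(channel, "general", "hi");
const second = notify(channel, "general", "");
```

Because failure is part of the return type, the compiler forces the caller to check `result.ok` before reading `value`, which is the main practical benefit over thrown exceptions.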

Fully open source. No catch.

Apache-2.0 licensed. Every line of code is on GitHub. No "open core" with paywalled features. No cloud lock-in. No telemetry you didn't ask for. Self-host it, fork it, audit it, extend it. It's yours.

Security through transparency - not obscurity.

comis (Latin, third declension) - courteous, kind, affable, gracious, gentle, polite. Also: elegant, cultured, having good taste.

We believe AI assistants should embody these qualities. Not just capable, but considerate. Not just powerful, but gracious - the kind of presence that asks before it acts, remembers what matters to you, and handles your trust with care.

Friendly by nature. Powerful by design.